Failure is inherent to innovation and can yield valuable lessons. But how can leaders prevent the more costly failures that occur in familiar territory?

Celebrating failures in Silicon Valley and beyond has become downright fashionable. The essential idea – that entrepreneurship, innovation and scientific discovery are only possible if you’re willing to endure the occasional (or, in some cases, frequent) failure along the way – is indisputable. But ongoing enthusiasm for fast failure in management circles makes it easy to overlook the other side of failing well: it’s just as important to prevent failures as it is to promote them.

How can we make sense of this apparently nonsensical claim? It starts with clarity about the variety of phenomena encompassed by the word failure. Drawing on my research in organizations over the past 30 years, I’ve identified three types of failure in my recent book, Right Kind of Wrong. Only one of these types warrants enthusiasm. This type, which Duke professor Sim Sitkin named intelligent failure back in 1992, is a source of discovery and advances in every field and in every organization.

Failing well in new territory

Intelligent failure refers to the undesired result of a thoughtful experiment in new territory. This type of failure inspires slogans like “fail fast; fail often”, along with the rest of the failure-happy talk that has become prevalent in the study of management. Enthusiasm for intelligent failure makes sense. No innovation department will last long without plenty of intelligent failures. This is the fun part of failing well.

When answers are not known in advance, you must experiment to make progress. As Amazon founder and then-CEO Jeff Bezos wrote in 2015, “To invent you have to experiment, and if you know in advance that it’s going to work, it’s not an experiment.” Every organization is at risk of stagnation unless it’s willing to face some failures along the way. If your goal is innovation and you never fail, you’re simply not doing your job.

A growing number of organizations encourage smart risk-taking by celebrating the intelligent failures that employees experience (and, importantly, report) when pursuing an innovative course of action to further the company’s goals. What makes these failures intelligent is that their instigators had good reason to believe they might work. They were pursuing a clear goal in new territory, had done their homework by considering what was already known, and kept the risk as small as possible. Together, these criteria define a failure as intelligent. Yes, they failed, but it was not for lack of trying to succeed.

But what about failures caused by sloppy work, by reinventing the wheel, or by not correcting small problems or defects in the execution of well-understood tasks? A failure in familiar territory, where knowledge about how to get a desired result is already established, is not a cause for celebration.

The rest of the failure landscape

A second type of failure is caused by a single mistake or deviation from prescribed practices. I call this basic failure. Basic failures range from the trivial (a burnt batch of cookies) to the tragic (a fatal car accident caused by a driver texting). Unlike intelligent failures, basic failures are theoretically and practically preventable. And well-run organizations aspire to do what it takes to prevent them.

A third type – complex failure – has not one but multiple causes, none of which would trigger failure on its own. An unfortunate combination of factors leads to a breakdown. Complex failures, too, can be large (a fatal Boeing 737 Max crash) or small (you miss an important meeting because you set your alarm for p.m. instead of a.m., left late, hit heavy traffic, and were driving on an almost empty gas tank). As I explore in Right Kind of Wrong, many complex failures can be prevented – or at the very least, their effects can be mitigated – when small problems or deviations are noticed, reported, and corrected quickly.

Neither basic nor complex failures produce surprising new innovations, disruptive business models, or scientific advances. They bring no new information and are often painfully wasteful. It was a basic failure when, in August 2020, Citibank accidentally wired a group of lenders the principal – $900 million – rather than the interest, a tiny fraction of that amount. Employees had checked the wrong box on a digital payment form. (To compound the error, a judge later issued a controversial ‘finders keepers’ ruling letting lenders who refused to return the money keep it; only in 2022 did an appeals court overturn that ruling.)

What does it take to prevent basic and complex failures in an organization? How can you engage people at all levels in noticing and solving problems to prevent quality defects from reaching customers? How can you encourage the fearless pursuit of excellence in familiar territory, while also encouraging the smart risks that allow improvement and innovation? That’s the underappreciated side of failing well.

Preventing failures in familiar territory

Excellence in familiar territory starts not with assuming perfection – the default – but instead with assuming that errors and deviations will happen. It requires a mindset that accepts human fallibility as a given, and recognizes organizational complexity as a risk factor. This is a mindset that approaches the world as it is – not as you wish it were. But it is not a gloomy outlook: it gives rise to a cheerful vigilance about detecting problems, along with an eagerness to solve them as quickly as possible.

There’s no better place to see how such a system can work than at Toyota, which has long illustrated the extraordinary value that vigilance in familiar territory can produce. Toyota instills a learning mindset that makes the relentless discipline of adhering to learning practices not only possible but, over time, even natural. Widely emulated, Toyota’s principles have helped other organizations – like Seattle’s Virginia Mason Medical Center – to commit to building practices and cultures where errors can be caught and corrected, so consequential failures can be prevented.

Failure prevention as a learning endeavor

At Toyota, problems are viewed as opportunities for learning, which encourages people to catch errors and to design experiments that support improvements. As John Shook wrote in his 2008 book Managing to Learn: “[Toyota’s] way of thinking about problems and learning from them…is one of the secrets of the company’s success.” Such thinking must be supported by processes that identify and reframe problems and lead to action to solve them. These processes form a mutually reinforcing system that continually deepens the company’s capabilities. Toyota’s most important accomplishment, writes Shook, is that the company “learned to learn”; its disciplined processes give rise to a culture of learning, which in turn reinforces the processes.

Think of it as a way of engaging everyone as a learner – even people in jobs characterized by what other organizations might view as mindless repetition. When everyone is a learner, even the most routine work is no longer routine. As Toyota researchers Steven Spear and Kent Bowen put it, “the system actually stimulates workers and managers to engage in the kind of experimentation that is widely recognized as the cornerstone of a learning organization” (‘Decoding the DNA of the Toyota Production System’, Harvard Business Review, September-October 1999). They argue

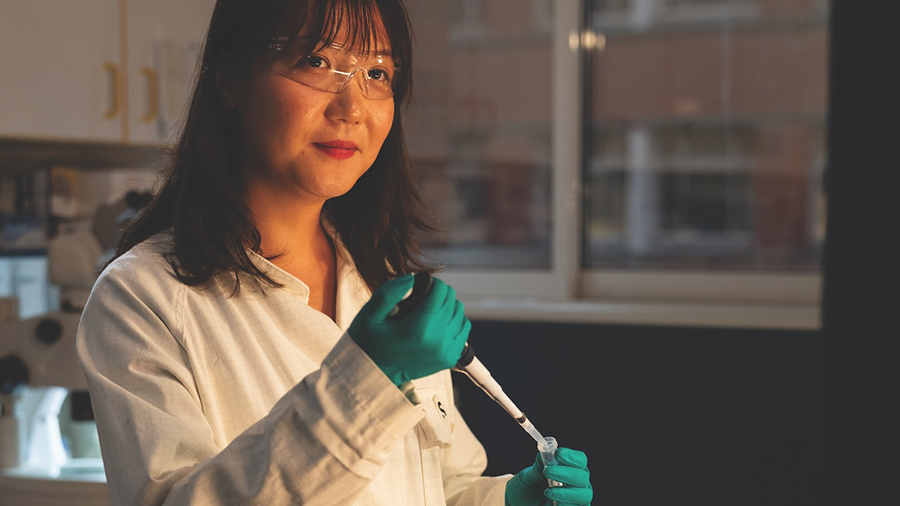

that Toyota’s rigorous system “creates a community of scientists.” Scientists in a production plant? Yes. The curiosity and systematic approach that characterize successful scientists is abundantly present at Toyota.

Some of the more visible tools of Toyota’s learning system are standardized work, checklists, and jidoka, which means stopping work immediately whenever an abnormality occurs. Each of these tools helps prevent basic failures. Tools like jidoka and checklists make it easy to do the right thing and difficult to do the wrong thing.

Under the Toyota Production System (TPS), standardized work – a formal list of steps for a job – applies to every job, allowing everyone, not just engineers, to participate in process improvement. Importantly, the guidelines are created by those who do the work, and revised frequently as more is learned.

Some might consider standardized work an enforcement tool that stops people from thinking, yet in practice at Toyota and Virginia Mason, it does the opposite. Rather than precluding creativity, standardized work clarifies the status quo and exposes its flaws. When people follow procedures consistently, problems become readily visible and can be solved quickly. Without that consistency, it’s hard to tell whether a deviation signals a problem or merely reflects individual workers’ different choices.

Toyota’s core practices prevent basic failures like saddling a customer with a defective product, support continuous improvement, and create enormous economic value. Health care offers a parallel: Dr Peter Pronovost developed a checklist for doctors and nurses in the intensive care unit at Johns Hopkins Hospital in Baltimore to “ensure against the haste and the memory slips endemic to human error”. When doctors and nurses in Michigan followed his checklist, they saved an estimated 1,500 lives and $100 million for the state over the course of 18 months.

Learning from the occasional happy mistake

Any exploration of the value created by failure prevention would be incomplete without a mention of the rare mistake that delivers happy results. For example, a popular burnt caramel ice cream flavor at Toscanini’s Ice Cream in Cambridge, Massachusetts, was born of a kitchen blunder. Ice cream maker Adam Simha was talking with local chef Bruce Frankel while melting sugar over a flame to form a caramel base. When Simha got distracted and burned the caramel, Frankel urged him not to start over but to experiment with the unintended result. The resulting ice cream tasted like crème caramel and has proved to be one of the store’s most successful flavors.

Or consider Lee Kum Sheung, who in 1888, while working in a small restaurant in Guangdong in southern China, left a pot of oysters on the stove too long. To his horror, he found the pot covered in a sticky brown goo. Distraught but curious, Lee tasted the result of his mistake – and discovered that it was delicious. Soon he was selling his “oyster sauce” under the Lee Kum Kee brand, and more than 130 years later, his error had made his heirs worth more than $17 billion. What such happy accidents share with TPS is an approach to mistakes rooted in curiosity.

Yet for every burnt caramel story, countless prevented failures deliver unheralded value that is impossible to quantify. Amazon, understanding this, adopted a Toyota-inspired mechanism to shut down any web pages with products that could not be delivered flawlessly, enabling it to catch small mistakes before they turned into basic or complex failures. The company also instituted blameless reporting. This practice helped coder Beryl Tomay succeed, despite a highly consequential mistake in her first year at Amazon, when she inadvertently shut down the order-confirmation page for an hour – an expensive failure.

After the glitch was discovered and corrected, the company held a review in which Tomay walked through the steps that led to the failure. Today she oversees business and technology at the company’s “last mile” delivery unit and exemplifies the company’s ethos of adapting and moving on, saying: “Every year, I’m learning something new.”

A culture of learning

At Toyota and elsewhere, learning practices thrive amid a learning culture that appreciates people for speaking up about problems. Unlike a culture that blames by default and hunts for the culprit when things go wrong, a culture that makes discovery and learning the natural way of things is vital to preventing basic failures. This is the least celebrated part of failing well. Teams in such organizations have the psychological safety they need to speak up quickly, without fear, when they see a problem. That same psychological safety also enables the small experiments that support continuous improvement.

Failing well

Failing well in a complex and uncertain world means pursuing thoughtful risks in new territory, where success is not guaranteed. Some experiments will immediately pay off in the form of new discoveries, game-changing innovations, or successful business models. Others will disappoint. Learning to embrace intelligent failures with curiosity and a determination to use their lessons wisely is vital to the long-term success of any company or career. But it’s just as important to be vigilant and curious in familiar territory – to employ the discipline of a learning mindset to prevent the wasteful failures that give rise to cynicism and apathy. Doing so also brings untold economic and human benefits.